Engineering Agent-First Workflows

A reflection from the Harvard Data Science Review Agentic AI 2.5 Week Intensive

This article follows directly from my previous piece, Why Agentic Systems Need Distinct Roles. That article argued that agentic systems fail when intelligence is treated as a single capability rather than a composition of responsibilities. This week’s work made that argument concrete. Instead of debating agent types in theory, the focus shifted to how distinct roles emerge from real operational use cases.

The work comes from a two-and-a-half-week Agentic AI intensive delivered by the Harvard Data Science Initiative. The emphasis is not on models or tooling but on method: specifically, how agentic systems are designed, structured, and governed inside real organisational workflows.

A significant amount of time was spent on use-case brainstorming, and that turned out to be the most constraining and useful part of the process. Rather than starting with what agents could do, the work began with where workflows actually break down: slow investigations, fragmented context, implicit decision thresholds, reliance on individual experience, and repeated reconstruction of understanding during incidents. Each of these became a first-class use case, not a symptom to be patched later.

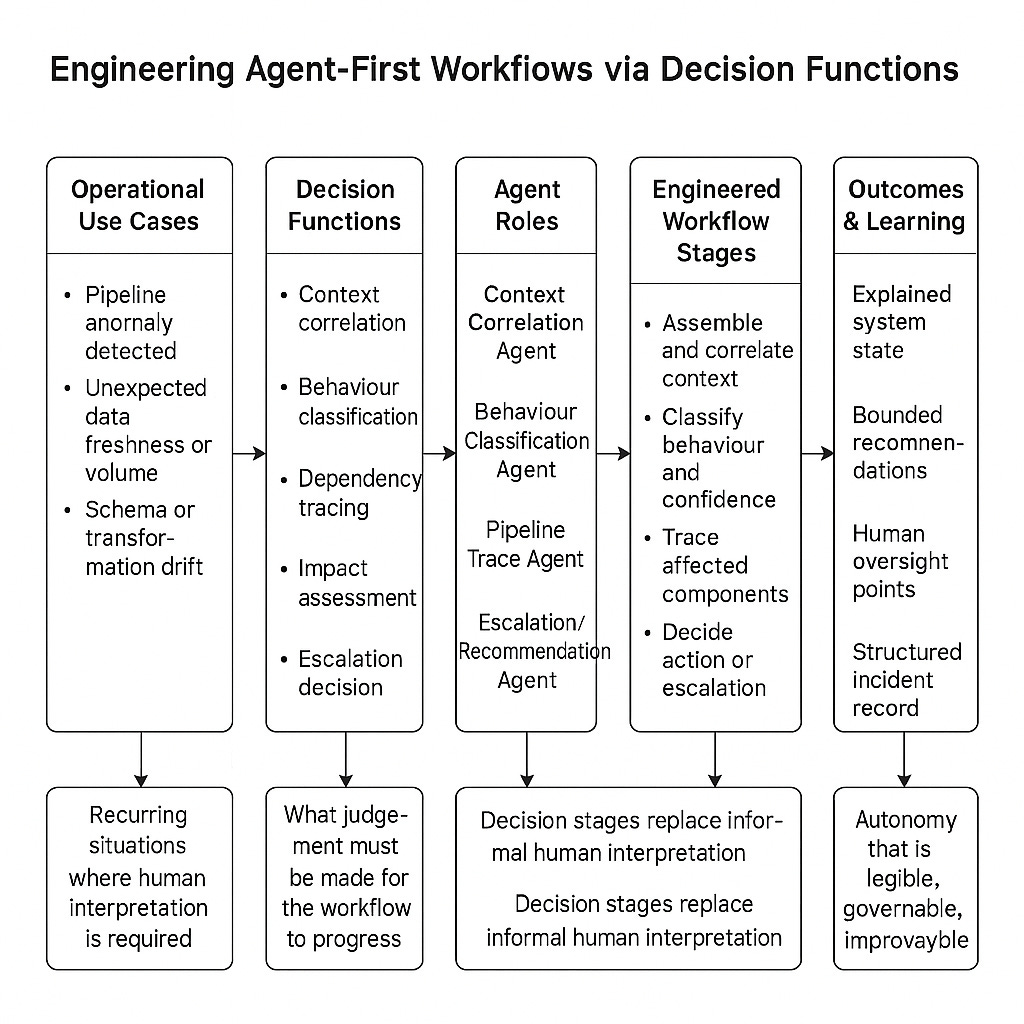

From there, each use case was analysed to identify the dominant decision function it required. Some problems are fundamentally about correlation, others about classification, tracing dependencies, assessing downstream impact, or deciding when escalation is warranted. Treating these as interchangeable is how teams end up with agents that act without understanding. The discipline of the exercise was in forcing separation. One use case, one primary decision function, one agent role.
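The separation rule can be sketched as a small check. This is an illustration only: the decision functions come from the paragraph above, but the pairing of use cases to functions and the names `DecisionFunction` and `assign_roles` are assumptions made for the example, not the course's own artefacts.

```python
from enum import Enum
from typing import Dict, Iterable, Tuple


class DecisionFunction(Enum):
    CORRELATION = "correlation"
    CLASSIFICATION = "classification"
    TRACING = "tracing"
    IMPACT_ASSESSMENT = "impact_assessment"
    ESCALATION = "escalation"


def assign_roles(
    pairs: Iterable[Tuple[str, DecisionFunction]]
) -> Dict[str, DecisionFunction]:
    """Map each use case to exactly one primary decision function.

    Raises ValueError if a use case is given two different functions,
    which is the "interchangeable" treatment the exercise rules out.
    """
    roles: Dict[str, DecisionFunction] = {}
    for use_case, function in pairs:
        if use_case in roles and roles[use_case] is not function:
            raise ValueError(
                f"{use_case!r} already maps to {roles[use_case].value}"
            )
        roles[use_case] = function
    return roles


# Hypothetical pairings, chosen only to show the shape of the mapping.
roles = assign_roles([
    ("fragmented context", DecisionFunction.CORRELATION),
    ("implicit decision thresholds", DecisionFunction.ESCALATION),
])
```

Each entry in `roles` then corresponds to one agent role. A use case that seems to need two functions is a signal to split the use case, not to widen the agent.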

The workflow I focused on was pipeline investigation and recovery in a data platform context. When pipelines behave unexpectedly, most of the time is not spent fixing anything. It is spent assembling context. Logs, metrics, schema changes, code deployments, historical incidents, and downstream usage all live in different places. The intelligence required at the start of the workflow is interpretive, not corrective.

That distinction reshaped how the Engineer phase of the AGENT method came into focus. Audit and Gauge are diagnostic. They surface how work actually happens, where judgement is applied, and where that judgement is costly or repeated. Engineering is where the analysis stops being descriptive and becomes normative. The question shifts from what happens today to what must be true for the system to act without constant human interpretation.

In practical terms, this meant redesigning the workflow around explicit decision stages rather than existing task boundaries. Context correlation became an engineered step. Behaviour classification became explicit. Tracing, impact assessment, and escalation were no longer informal activities performed by experienced individuals, but defined stages with clear inputs, bounded outputs, and confidence thresholds. The workflow was evaluated across representative operational scenarios to identify decision functions and map them to distinct agent types.
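One way to picture a defined stage with clear inputs, bounded outputs, and a confidence threshold is the minimal sketch below. The names `StageOutput` and `run_stage`, the label set, and the threshold value are all hypothetical. The sketch assumes that a result below the threshold is handed to a human rather than acted on.

```python
from dataclasses import dataclass
from typing import FrozenSet


@dataclass(frozen=True)
class StageOutput:
    stage: str
    label: str         # one of the stage's allowed labels
    confidence: float  # between 0.0 and 1.0
    escalate: bool     # True when a human should review the result


def run_stage(stage: str, allowed_labels: FrozenSet[str], label: str,
              confidence: float, threshold: float) -> StageOutput:
    """Wrap a stage result, enforcing bounded outputs and a threshold."""
    if label not in allowed_labels:
        raise ValueError(f"{label!r} is not a valid output of {stage}")
    if not 0.0 <= confidence <= 1.0:
        raise ValueError("confidence must be between 0.0 and 1.0")
    return StageOutput(stage, label, confidence,
                       escalate=confidence < threshold)


# Hypothetical labels and threshold for the behaviour classification stage.
LABELS = frozenset({"expected", "degraded", "failed"})
result = run_stage("behaviour_classification", LABELS,
                   label="degraded", confidence=0.62, threshold=0.8)
print(result.escalate)  # True: 0.62 is below the 0.8 threshold
```

The point of the wrapper is that the stage cannot emit a label outside its contract, and low confidence becomes an explicit signal instead of an unstated judgement call.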

Agent prioritisation mattered. The sequence deliberately front-loads understanding before action. Context correlation first, then behaviour classification, followed by tracing and impact assessment, and only later root-cause validation, learning, and remediation recommendation. That ordering encodes a constraint. Autonomy without shared understanding is a liability, not a capability.
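The ordering constraint can be expressed as a simple gate. This is a sketch under one assumption: that the sequence is strictly linear, so a stage may run only once every earlier stage has completed. The stage names follow the order given above. `STAGE_ORDER`, `can_run`, and `next_stage` are illustrative names.

```python
from typing import Optional, Set

# Understanding stages come first; action-oriented stages come last.
STAGE_ORDER = [
    "context_correlation",
    "behaviour_classification",
    "tracing",
    "impact_assessment",
    "root_cause_validation",
    "learning",
    "remediation_recommendation",
]


def can_run(stage: str, completed: Set[str]) -> bool:
    """A stage may run only when all earlier stages have completed."""
    position = STAGE_ORDER.index(stage)
    return all(earlier in completed for earlier in STAGE_ORDER[:position])


def next_stage(completed: Set[str]) -> Optional[str]:
    """Return the first stage not yet completed, or None when done."""
    for stage in STAGE_ORDER:
        if stage not in completed:
            return stage
    return None


done = {"context_correlation", "behaviour_classification"}
print(can_run("remediation_recommendation", done))  # False
print(next_stage(done))  # tracing
```

A real system might allow tracing and impact assessment to run in parallel. The strict list is only the simplest way to show that remediation cannot be reached without the understanding stages behind it.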

This is why the Engineer phase is not about adding AI to a workflow. It is about deciding what kind of system you are actually building. Making data accessible, decisions explicit, and success measurable is not documentation work. It is system design. If those elements are not engineered deliberately, autonomy becomes either unsafe or illusory.

Once the workflow is framed in terms of decision functions rather than tasks, the design work becomes concrete. Each agent has a bounded role, defined inputs, and a clear contribution to system understanding. Humans move from continuous interpretation to oversight and exception handling. Learning stops being a separate initiative and becomes a by-product of structured investigations that produce reusable artefacts.

This is also where the Engineer phase earns its place in the AGENT method. Earlier phases constrain what deserves to exist. Engineering determines what intelligence is allowed to act, and under what conditions. Navigation and tracking only work if decisions, data, and success signals have been engineered clearly enough to govern and measure. When those phases feel difficult later, it is usually because the engineering work was incomplete.

The core lesson from this week is simple but demanding. Agentic AI is built by turning recurring use cases into explicit decision structures, not by layering agents onto existing workflows. When those structures are engineered deliberately, autonomy becomes legible, governable, and improvable. That is the point where agentic AI stops being a concept and starts functioning as an operational system.